Meta is facing one of the most consequential child-safety trials yet brought against a major social media company. A New Mexico jury is now deciding whether the company misled families about how safe its platforms were for children.

The case centers on Facebook, Instagram, and WhatsApp. New Mexico argues that Meta failed to adequately protect minors from sexual exploitation and other harms while publicly presenting its platforms as safer than they actually were. Jury deliberations began on Monday, March 23, 2026, after roughly six weeks of testimony in Santa Fe.

What New Mexico Is Alleging

At the heart of the case is a claim that Meta violated New Mexico’s Unfair Practices Act by making misleading statements about child safety. State lawyers say the company did not simply struggle to police bad behavior online. They argue Meta knew its platforms exposed children to serious risks, including sexual exploitation, compulsive use, and mental health harms, while continuing to reassure the public that its safeguards were strong.

The state’s case was fueled in part by an undercover investigation launched by New Mexico Attorney General Raúl Torrez in 2023. Investigators posed as minors on Meta platforms and said they encountered sexually explicit content and were contacted by adults with disturbing ease. State lawyers have used that evidence to argue that Meta’s enforcement systems were not nearly as effective as the company suggested.

New Mexico also argues that Meta’s own internal research showed the company understood some of the harms tied to teen use, including addictive design issues and exposure to dangerous material. According to the state, that makes the company’s public messaging about safety especially problematic.

Meta’s Defense

Meta says the state is distorting the facts.

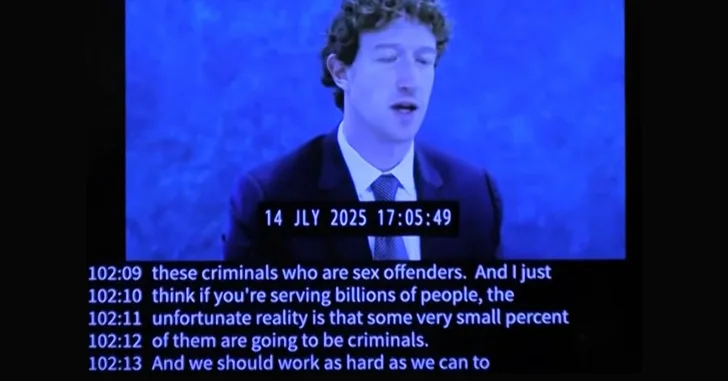

The company argues that it has invested heavily in safety systems, moderation teams, age-related protections, and tools designed to combat child sexual abuse material. Its lawyers told jurors that no online platform can prevent every harmful interaction and that Meta has never claimed perfection. Meta also says it has been transparent that safety enforcement is difficult and ongoing.

In other words, Meta’s defense is that the existence of predators or harmful content on its platforms does not automatically prove deception or unlawful conduct by the company itself.

Why This Trial Matters

This is a major test case.

Social media companies have faced a growing wave of lawsuits over child safety, teen mental health, and addictive product design. But very few of these cases have actually made it to a jury. That is what makes this trial different. It is not just another lawsuit filed for headlines. It is one of the first major cases where jurors are being asked to weigh internal research, public statements, platform design choices, and evidence from undercover investigations all in one courtroom.

There is also real money on the line. AP reported that New Mexico is seeking heavy civil penalties that could top $2 billion, depending on how violations are counted. That figure alone makes this case worth watching closely, even for investors who do not usually follow legal fights over tech policy.

The case could get even more serious after the jury phase. AP reported that the judge may later decide a separate public nuisance claim, which could open the door to court-ordered remediation or funding for corrective programs.

Why Investors Should Care

For Meta shareholders, the immediate issue is not whether this one case alone will derail the company. Meta is enormous, profitable, and financially strong enough to absorb even a large penalty. The bigger risk is cumulative.

If Meta loses, the verdict could embolden more states, school districts, and private plaintiffs to press harder in similar cases. It could also strengthen the political case for stricter rules around youth access, platform design, parental controls, algorithmic recommendations, and age verification. That matters because regulatory pressure can increase compliance costs, limit engagement tactics, and create reputational damage that weighs on long-term valuation multiples.

There is also a broader read-through for the social media sector. This case adds to the argument that online platforms may eventually face a legal reckoning similar to what other industries faced when internal knowledge of harm became central evidence in court. AP noted that some observers see these social media cases as early-stage parallels to litigation campaigns once mounted against tobacco and opioid companies.

That does not mean Meta is about to face the same outcome. But it does mean investors should stop treating child-safety litigation as background noise. These cases are getting further into the system, and juries are now being asked to decide whether platform companies crossed a legal line.

The Bottom Line

The New Mexico trial is now in the hands of a jury, and the central question is straightforward: did Meta merely fall short in trying to police an enormous digital ecosystem, or did it mislead families and users about how safe its platforms really were for children?

That distinction matters because it separates an enforcement failure from unlawful deception. If jurors side with New Mexico, the verdict could become one of the most important legal blows yet against a major social media company over child safety. It would also send a message to investors that litigation and regulatory risk around youth protection is no longer theoretical. It is here, it is expensive, and it is moving closer to the core of the social media business model.